My blog has moved! Please visit the new blog at: https://blog.steveendow.com/ I will no longer be posting to Dynamics GP Land, and all new posts will be at https://blog.steveendow.com Thanks!

Friday, June 25, 2010

Parsing Addresses: Importing Unparsed Addresses Into Dynamics GP - Part 2

Then, finally, I came across exactly what I was looking for: An economical tool called RecogniContact, developed by LoquiSoft, that is specifically designed to perform full "contact" parsing. It can be integrated into a custom application or web site, and allows you to programmatically parse and validate address information.

In addition to parsing addresses, it can also parse contact data, such as prefix (Mr., Mrs.), middle initial, suffix, title, department, phone number, and e-mail address. It will even tell you the gender of the contact for over 20,000 first names. But wait, there's more! More, you say?

It uses international postal coding standards to intelligently parse addresses for 21 countries (currently US and Europe), parse addresses for another 21 countries based on address structure, and, as scary as this sounds, it can even process contact data in 13 different languages. It can parse international phone numbers based on country-specific standards, and it can even determine if an area code is valid for a given country. This actually thwarted my lazy test plans when I tried to use a fake phone number of (123) 456-7890. It detected the invalid number and, sigh, I had to update my data to use a valid area code.

In addition to this exhaustive list, another very valuable feature is its ability to parse the fields that it can successfully recognize, but then output the specific values that could not be recognized. This is actually a critical feature when parsing addresses, as it allows the user to skip past the contacts that imported fine, and makes it easier for them to specifically identify and manually review only the "partially" parsed addresses.

I corresponded with Werner Noska, the owner of LoquiSoft, and he explained that the tool started as a research project for an Austrian federal agency, which resulted in ContactCopy, a desktop address parser. Due to requests by companies looking to incorporate the technology into their software, he developed RecogniContact.

He explained some technical details about RecogniContact that were outside of my realm, such as structural parsing vs. content aware parsing, and its ability to recognize data elements even without separators. I know that software developers are sometimes "ego enhanced", and therefore occasionally looking to prove something with their L337 development skillZ, but if you have ever tried to write code from scratch to parse addresses, especially international addresses, trust me, you just can't compete with the knowledge, experience, and accuracy that is built into RecogniContact.

RecogniContact is available as a web service subscription, or as a licensed COM component that can be integrated with .NET or other COM aware development tools. I chose the COM option, since it was simpler for me to license for my client and their desktop customer import. With the sample .NET code provided by LoquiSoft, I was able to quickly develop a C# prototype, and in a few hours, I had RecogniContact fully integrated into my customer import.

Based on the limited sample data that I was given by the client (only 330 records), I have been able to consistently achieve an 80% "successful parse rate" (i.e. contact is fully parsed) without manipulating the raw data at all, which I think is pretty good. The majority of those successfully parsed addresses were in the US and Europe, which explains the high parse rate. But RecogniContact also did a decent job with some messy addresses, and was able to at least partially parse all of the international addresses. The 20% of the addresses that were not fully parsed were all partially parsed to some extent, and I was able to flag them for client review, so the results are far better than I could possibly hope to achieve writing my own code.

Here is one I thought was impressive. Even though the data includes the incorrect country, RecogniContact's knowledge of the UK postal codes allow it to recognize the country:

Original Data:

Neal Hutchins/19 Colomberie, St Helier/Jersey/Channel Islands/JE5 7SY/United States/neal.hutchins@telecom-solutions.com, Narissa.Atista@telecom-solutions.com

Parsed Data:

First: Neal

Last: Hutchins

Full Name: Neal Hutchins

Gender: M

Company: United States

Address1: 19 Colomberie, St Helier

City: Jersey/Channel Islands

Postal: JE5 7SY

Country: United Kingdom

Country ISO: GB

Email: neal.hutchins@telecom-solutions.com

Unrecognized Values: Narissa.Atista@telecom-solutions.com

In this example, there were two e-mail addresses, in which cases it parses the first, and considers the second email address unrecognized. And having "United States" in the data is odd, so it does its best and puts it in the company field. This was actually a common issue with the data I received, so I can easily detect the country, and if it is not US, I can quickly scan the other contact fields to see if United States is present and then remove it, or move the value to the Unrecognized Values property.

Even though it can't perform miracles with every address, RecogniContact's ability to return the unrecognized data values allows me to easily flag the customer records that need manual review and corrections, enabling the client to focus on the problems, and reducing the time required to review the imported customers.

One of the limitations of RecogniContact, and any parsing tool for that matter, is the ability to handle non-standard or ambiguous values. Things like unusual titles, mail stops, building numbers on a campus, or attention lines seemed to give it the most trouble, as these are often not standard postal address values. Here are a few examples:

A-105 Memphis, Y-11 Shasta Nagar, Lokhandwala, Complex, Andheri/Mumbai/400053/India

Stockton Network Services, Inc c/o First Fidelity Financial ATTN: Anita Woodman T10/060/110 Riverside Blvd/Jacksonville/FL/32204 904 884-6830 SVP - Finance & Controller Stan Parkman/United States Bob.Henson@fng.org

Both of these are just too random to parse 100%, but it usually gets at least 50% right, and doesn't take long for a human to correct the remaining fields.

Another aspect of my sample data that posed a challenge for RecogniContact was symbols, such as mixtures of colon, semi colon, ampersand, dash, comma and slash, all in the same address. This is understandable to me, as the tool needs some means of trying to identify field values, and mixed symbols in contact data pose quite a challenge, especially if they are embedded in a field value.

The Stockton example above is a good one. When I try and parse it, the "c/o", "ATTN:", and "T10/060" mail stop make it difficult to parse.

First: Bob

Last: Henson

Full Name: Bob Henson

Gender: M

Company: SVP - Finance & Controller Stan Parkman/United States

Address1: 060/110 Riverside Blvd

City: Jacksonville

State: FL

Postal: 32204

Country: United States

Country ISO: US

Phone1: +1 (904) 884-6830

Email: Bob.Henson@fng.org

Unrecognized Values: Stockton Network Services, Inc c/o First Fidelity Financial ATTN: Anita Woodman T10

But as you can see, it did a decent job of parsing a pretty difficult pile of data, getting most of it correct.

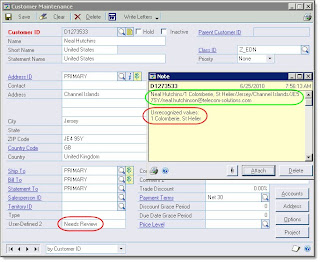

With all of that said, once you get the contact information in Dynamics GP, it's actually quite convenient to review the contacts that did not parse successfully. To make the manual review easier, I store both the original source data and the unrecognized values in the Customer Note. When reviewing the customer records, the user is able to open the Note window side-by-side with the customer window and edit the customer record. It works very well and is a simple process.

Another thing I do in Dynamics GP is flag customers whose information did not parse fully--I set the Customer User Defined Field 2 to "Needs Review". We then created a SmartList favorite that looks for this value, and the client then has a single place to see the customers that need manual review, and they can simply double click on each SmartList record to perform their review.

One final interesting issue that I found with two sample records were people's names that resembled street names. So "Thomas Way" was interpreted as a street instead of a name. I can't think of any way around that, and since it was very rare, it wasn't an issue so much as an interesting coincidence.

My customer import with the RecogniContact parsing component has been deployed at the client site, and they will begin testing it this week, so I'll soon see how well it works with fresh data and I am earger to find out how well it meets their needs.

Go forth and parse!

Thursday, June 24, 2010

Dynamics GP 2010 Developer Toolkit Documentation

I just went to PartnerSource to download the eConnect SDK, and the download page just references the GP 2010 DVD download page. But the GP 2010 RTM DVD does not seem to contain the dev tools.

And the GP 2010 DVD download page lists the eConnect SDK as a separate download, which, yes, points me back to the eConnect SDK download page. Look kids, there's Big Ben!

Since I was already there, I figured I would try and download the other GP 2010 SDKs, but the lovely circa-1990s Download Manager isn't launching when I click on the links. (well, it seems restarting IE fixed that)

Is somebody messing with the Matrix?

And leave it to Google to help me find what I can't get to on PartnerSource. For any other poor soul that has also gone in circles trying to find the SDK documentation, here is the public link to a few of the files.

http://www.microsoft.com/downloads/details.aspx?displaylang=en&FamilyID=8b8a61f6-cf86-40e4-b146-f4d8a6c11633

http://bit.ly/d2utwX

And just to warn you, the downloads are a bit odd. The "Developer Toolkit Help Files" download contains the following 3 CHM files:

- eConnectProgrammersGuide.chm (schema reference)

- DynamicsGPWebServiceRef.chm

- DynamicsSecurityWebServiceRef.chm

Whereas the "Toolkit Manuals" PDF download contains:

- eConnectProgrammersGuide.pdf (development reference)

- eConnectInstallAdminGuide.pdf

- VSTDGPProgrammersGuide.pdf

- WSInstallAdminGuide.pdf

- WSProgrammersGuide.pdf

Seems that it will be a while before all of the 2010 resources get organized.

Happy hunting.

Relevant? Schmelevant?

My friend's first response was to reassure me that I will personally remain relevant because I am good trainer, a knowledgeable resource, and Dynamics GP clients are not going to suddenly disappear. And to take it a step further, I am a curious individual who is continually adapting and looking for ways to increase my knowledge. All of this was a comforting ego boost, of course, but afterwards I began to wonder if I needed to be more intentional in my quest to stay relevant.

Right now, I tend to learn things as needed. A customer requests something, a challenge is presented, I try to force myself to continually take those things on-- to stretch outside my comfort zone. But how about those areas that don't come up? How about being more conscious when selecting areas to expand my knowledge? This, in turn, brought up many questions for me.

- How do you select technologies that are worthwhile, knowing that you will occasionally invest in those that do not lead anywhere?

- How do you go about gaining "valuable" knowledge in these areas? We can all read books, set up test servers, but how do you put yourself on a path where you will gain the practical knowledge to really excel?

- How do you gain those all-important first chances that allow you to apply the new knowledge in a safe but real-world scenario? Sometimes this is further limited if you may not encounter the opportunities normally-- if so, how do you cultivate them?

- And, last but not least, how do you do this at the same time you need to continue to perform optimally in your "day" job?

I don't have all of the answers, and would love to hear from those of you out there that have struggled and figured out an approach that works for you (or not, maybe). I know that my most valuable knowledge has come over time from a combination of books, practical experience, and failures (yes, you read that right) so it won't happen overnight. But my mid-year resolution is to find a way to be more methodical in my approach and increase my personal return on investment when it comes to knowledge.

Personally, one of the things I enjoy most about my job is the constant innovation and adaption. Never is there a dull moment (okay, maybe THAT is an exaggeration), and I find that I enjoy applying new found knowledge. So I don't want this to come across as a world-weary complaint, just the desire to find a way to better structure my own learning (ironic, huh, for a trainer?). My friend referred to this as "cultivating yourself as a business" which I think is an excellent mind frame to have even for those of us that are happily attached to partners and not flying solo.

As I tell many students, we all learn at our own pace and the secret is finding your own pace (and method) and being comfortable with that. Please share your thoughts, I would love to hear them.

Wednesday, June 23, 2010

Parsing Addresses: Importing Unparsed Addresses Into Dynamics GP - Part 1

The data in the AS400 was stored in free form text fields, such as ADDRESS1, ADDRESS2, ADDRESS3, NOTE1, NOTE2, etc. The system did not have separate fields for city, state, or zip, and there was no validation on any of the fields. Contact names and phone numbers were placed in different fields. And of course, there were alot of data errors, missing information, random notes, inconsistent formatting, and other oddities in the data that made a clean import nearly impossible.

At the time, we looked for tools to try and parse and validate the data, but we couldn't find any good, and economical, options. The client ultimately chose to develop their own routines to parse the data just enough to import it into Dynamics GP's separate address and contact fields. It wasn't pretty, but we got through it.

Fast forward to last month, when I was asked to develop an integration that would import customers from Excel. Pretty basic, except for some fun caveats: All of the address data will be in one column in Excel, and company names, contacts, and e-mail addresses are stored inconsistently in other columns. Oh, and did I mention that the customer information was international? Yup, the data included addresses from Michigan to Mongolia (literally, as in Ulaanbaatar). Once again, I was in address parsing purgatory. (Virgil, is that you???)

Here's one example of an actual customer address that I received (details modified slightly to protect the innocent):

Neal Hutchins/19 Colomberie, St Helier/Jersey/Channel Islands/JE5 7SY/United States/neal.hutchins@telecom-solutions.com, Narissa.Atista@telecom-solutions.com

Try parsing that bad boy. If you try hard enough, you'll find that the address is not in the United States, but is actually somewhere in the UK.

With this fun challenge, I did some fresh research to see if anything had changed in the world of address parsing. Given the nature of the problem, there is no single magical solution, but I learned of a few new options.

First, of course, were recommendations to try parse the data "manually" through code. In some cases, this may be feasible (i.e. US addresses only), but given the data that I needed to parse, I knew that I didn't have enough time to write an address parsing routine that would be particularly accurate.

The next recommendation was to utilize mapping web services to "geocode" the address information. This is a very good article and code sample on SQLServerCentral.com with a nice overview of the process, as well as a great code sample. While this is a very slick solution that might be a great option for certain situations, there were a few significant limitations for my project.

The first is that the geocoding option seems to only handle address information, not "contact" information, such as company name, contact, phone numbers, and e-mail addresses. The second issue is that the geocoding option does not seem to do a very good job of partially parsing an address. Many of my test addresses simply failed, and returned no results.

And finally, the deal killer, which is unfortunately not mentioned in the SQL Server Central article, is that the Google Terms of Service heavily restricts the use of the API for separate (non-map related) or commercial applications. I'm not a lawyer, so reading the terms of service didn't enlighten me much, but this is what others have informed me.

http://groups.google.com/group/google-contacts-api/browse_thread/thread/65fc032082d5e374

http://code.google.com/apis/maps/documentation/geocoding/#Limits

http://code.google.com/apis/maps/faq.html

So with those two options being ruled out, I started to look for other options that would specifically address my requirements.

I then learned that there are services that you can use to manually parse, scrub, and validate addresses. While potentially effective, these involve paying for a service (presumably expensive) and sending data in batches. I needed a real-time programmatic parsing and validation solution for my integration.

After more searching, I finally came across a solution. I'll discuss it in more detail in part two.

Tuesday, June 22, 2010

Using ORDER BY in a SQL Server 2005 or SQL Server 2008 View

With SQL Server 2000, ORDER BY clauses were 'sort of' honored, as you could use the TOP 100 PERCENT clause to pacify SQL 2000 and get your results in the requested order.

But with SQL Server 2005, the TOP 100 PERCENT clause no longer worked. SQL purists would claim that SQL Views should not be ordered, and technically, I can understand that argument.

But I don't care about those technical or purist arguments. I use SQL Server as a business tool, whose goal is to deliver data to business users and business owners in any form they want--not what is technically correct according to database engine developers. If business owners want the data upside down and backwards, I need tools that help me get the job done.

Anyway, I'm currently working on a view that will provide a list of inventory items. I won't be able to control how the client queries the view, so I can't control whether they add an ORDER BY clause on the view. If they use Excel to query the view, I can pretty much guarantee that they won't be manually customizing the query, so I have no way of knowing how the data will be sorted once it gets into Excel.

Anyone who has done reporting for an accountant knows that arbitrary, random, and unpredictable are not three of their favorite words. Therefore, I want to control how the data is sorted by the view, and know what my client is going to see when they query the view.

Looking into this issue after many years, I stumbled across this thread on the Microsoft SQL Server forum. One of the participants posted a very interesting variant of the TOP 100 PERCENT clause that apparently tricks SQL Server 2005 into honoring the ORDER BY clause in a view.

The trick is to use a TOP # statement with a specific, but very large number. He recommends just using the maximum integer value, which would be TOP 2147483647.

Sure enough, this works like a charm, and I can now ensure that the results of my SQL Server 2005 view are ordered any way that I would like (well, I'm still working on the upside down request).

There is a potential question of whether this approach impacts performance. For very large result sets or very complex queries, I suppose it could, but for Dynamics GP queries that are written properly, I very rarely have to worry about performance.

I just tested this technique with SQL Server 2008, and it appears to still work.

Go forth and sort!

Thursday, June 3, 2010

Multiple companies, multiple databases? Maybe?

I break it down to a variety of "tests" or points to consider when analyzing whether to have a database for each company or to combine several companies in to one database. Of course, combining companies in to one database is not incredibly common...but for a select set of customers, it really is the best solution.

Here is a cheat sheet I have put together that I use as a guide to the pros/cons and tests that I apply when helping a customer make a decision regarding the structure of their companies/databases.

Please feel free to share your own questions/tests that you apply to this decision as well! As always, thanks for reading :)

Tuesday, June 1, 2010

Customer Service

I was impressed. It was a good lesson in customer service.

Providing consulting services to customers requires that a firm provide some level of satisfactory customer service. And Dynamics GP implementation projects can produce some challenging situations that will require you to call on your customer service policies or procedures to ensure that your clients are satisfied.

But due to the nature of software implementations, it's not always quite as straightforward as sending off a new package and upgrading to next day shipping. Sometimes a client needs a module or third-party add on solution that wasn't identified during the sales and discovery process, meaning more money that they didn't anticipate spending. Often new business requirements are discovered during the implementation. Sometimes risks that were once considered immaterial become significant. Sometimes things take much longer than anticipated, causing budget overruns or missed deadlines.

How good are you, or your team, or your organization, at anticipating and/or avoiding these issues? When you do run into the issues, do you have well understood processes or procedures for handling them? And during that process, do you have mechanisms to make sure that the client is happy? (i.e. just because the problem gets "resolved" doesn't mean the client is happy)

If a client calls or e-mails your organization, do you have guidelines regarding response time? Do you measure or track how quickly you respond?

How do you assess when a problem is resolved? And then do you assess customer satisfaction after you have resolved an issue? Do you follow up with the client later?

If you are over budget on a project, do you ever offer a discount or non-billable time to complete items where your estimate or delivery may have contributed to the overrun?

I don't think it's easy to have answers to all of these questions, and it's certainly not easy to track everything consistently to measure and assess your organization's performance.

There are a lot of different ways of providing good customer service and keeping your customers satisfied, and there is no right answer, but it helps to at least have a conversation or narrative about how you or your organization strives to provide good customer service.

One habit of mine is responsiveness. I try and be as accessible and responsive as possible. A friend of mine jokes that if I don't respond to his e-mails in 5 minutes, he gets worried about me.

Several times I've been driving when I've received a call from a client needing urgent assistance. I've pulled into the nearest parking lot, fired up my laptop, started a GoToMeeting session, and started working with them on the issue. On my laptop. In my car. In front of my dentist's office. With a client in another state.

I can't always be available, but with a laptop and a Blackberry, I can usually respond to most inquiries the same day, or at least respond and let the customer know that I'll be working on their issue.

So what is your organization's story about how you provide good, excellent, or superior customer service?