Today was a very good day. After hours of effort by a team of three consultants, we solved a vexing Dynamics GP SQL performance issue for a client.

I'll start with the conclusion and explain why this was such a great achievement.

1. We actually identified the cause of the performance problem. For those that have tried to identify the cause of GP running slowly, locking up, or not responding, you know how difficult this can be. Working together, our team of 3 was able to conclusively track down the specific SQL stored procedure that was causing the problem. This was quite an accomplishment.

2. It took the combined effort of three consultants with three different areas of expertise and a lot of combined Dynamics GP, networking, and SQL Server experience to identify the cause. In my experience, it is very, very, rare to have the opportunity to assemble such a team to work on this type of issue, and it was a great experience for all of us.

3. The client was willing to invest in consultants and made the commitment to identify and resolve the issue. So often I've had situations where a client was plagued with performance issues, but there wan't a strong commitment to resolve them. The client will likely spend several thousand dollars resolving this particular issue, but I believe it was a worthwhile investment--or I will once we finally resolve the issue.

And with that, some background...

The Dynamics GP 2010 client contacted their partner in the San Francisco Bay Area a few months ago complaining about performance problems with Dynamics GP. GP is sporadically slow, and sometimes hangs or stops responding completely. The "lock up" can last from 5 minutes to 20 minutes and affects multiple users at different times.

The GP partner looks into it, but because the issue is so unpredictable and can't be reproduced on demand, no cause is identified in Dynamics GP or SQL. Apparently the problem continues and gets even worse. At this point, the client requests a network expert to try and determine if a network issue is causing the problem. The GP partner identifies a networking data communications consultant in Denver and flies him out to San Francisco this week.

After two days of network traffic monitoring and traces, the network consultant, working with the GP consultant, is able to capture the actual network packets that are occurring in the seconds leading up to the performance issues in Dynamics GP. Like a countdown, he is able to list the users, the actions they were performing in different applications, and the data in the network packets that were being issued by those users at the exact time that the problem occurred.

This data indicated that a revenue recognition application used by the client was issuing queries against the Dynamics GP database. He assembled the contents of the network packets, and saw that the "rev rec" application was querying the Extender "EXT" tables in Dynamics GP. When implementing Dynamics GP and the revenue recognition system, the client chose to store additional sales order invoice field values in Extender windows that could be used to perform rev rec calculations. So this all makes sense.

Since the network consultant had uncovered a pile of SQL, the Dynamics GP consultant called me to decipher the SQL activity and determine how it might relate to the performance issue. Coincidentally, my blog-mate Christina Phillips and I just presented last week at the Tech Airlift 2013 conference in Fargo, and the topic of our presentation was "SQL Skills for Consultants". While I am by no means a super-SQL-guru, I regularly get calls like this to solve some tricky problems, so I have had a fair amount of experience in this area.

So today I had a few GoToMeeting sessions with the GP consultant and network consultant where we pored over the SQL activity and dug into the Dynamics GP company database to understand what was going on.

We saw that the rev rec application issued a select statement against the EXT00100 table, and then called a custom stored procedure. Immediately after that stored procedure is called, Dynamics GP locks up for one user. A few seconds later, GP locks up for another user, and then another. So the network consultant had identified our culprit: a custom stored procedure.

After reviewing the network trace data, the GP consultant knew the specific actions that were being performed in the rev rec system and in Dynamics GP, so she was able to get the users to reproduce the issue. Suspecting that we had a locking issue on our hands, I sent her a slightly modified version of the "dynamic management view" query from this Stack Overflow thread that identifies blocked and blocking processes in SQL Server.

SELECT

t1.resource_type,

t1.resource_database_id,

t1.resource_associated_entity_id,

t1.request_mode,

t1.request_session_id,

s1.login_name,

s1.program_name,

s1.host_name,

s1.nt_user_name,

t2.blocking_session_id,

o1.name 'object name',

o1.type_desc 'object descr',

p1.partition_id 'partition id',

p1.rows 'partition/page rows',

a1.type_desc 'index descr',

a1.container_id 'index/page container_id'

FROM sys.dm_tran_locks as t1

LEFT OUTER JOIN sys.dm_os_waiting_tasks as t2

ON t1.lock_owner_address = t2.resource_address

LEFT OUTER JOIN sys.objects o1 on o1.object_id = t1.resource_associated_entity_id

LEFT OUTER JOIN sys.partitions p1 on p1.hobt_id = t1.resource_associated_entity_id

LEFT OUTER JOIN sys.allocation_units a1 on a1.allocation_unit_id = t1.resource_associated_entity_id

LEFT OUTER JOIN sys.dm_exec_sessions s1 ON s1.session_id = t1.request_session_id

WHERE o1.name IS NOT NULL

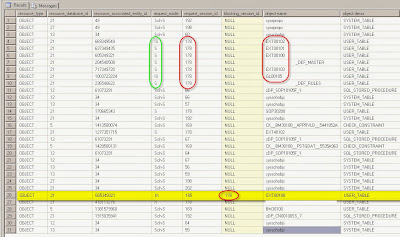

When she sent me the results of the query, it painted a great picture of the problem. Here is a screen shot of the query results--I know it looks busy, but stay with me, it's pretty simple to interpret.

Notice that the highlighted row is the only one with a value in the "blocking" column. That means that an application is trying to perform an operation on the EXT00100 table, but is being blocked by another process. Notice that the "request_mode" column on that row says "IX". This means that the row in yellow is waiting for an "exclusive" lock on the table, in this case because it is trying to update the EXT00100 table.

When a SQL process is blocked, it typically causes the calling application to wait--it will often just sit there and be completely unresponsive as it waits for the SQL command to finish. The SQL operation usually finishes in a fraction of a second, but if the process is blocked, it may wait for a minute, or perhaps indefinitely, until the operation completes.

The Dynamics GP consultant knew that particular yellow row was a Dynamics GP user--the user reported that GP was locked up. So this told us that something was locking the EXT00100 table, and that is why Dynamics GP was locking up--it was waiting indefinitely for the table to become available for an update.

Which leads us to the records for process 178. 178 is the process that is blocking the update to EXT00100. But why is it blocking? Well, if you look at the "request_mode" values, most have a value of "S", which means "shared lock". Shared locks allow other processes to read the locked data, but the data cannot be changed or updated while the shared lock is present. So process 178 appears to have issued SELECT queries against the EXT tables, with the default shared lock, that are preventing Dynamics GP from updating those tables.

Fundamentally, this isn't a problem--a query can retrieve data from the EXT tables, complete in a fraction of a second, and then the shared lock is released. But for some reason, the shared lock of process 178 persisted for up to 20 minutes at a time. During that time, Dynamics GP would become unresponsive if a user tried to update any Extender records.

So, now that we know why GP is locking up, the next question is: Why is a mysterious query against the EXT tables locking them for 20 minutes? For that, we go back to the network packet trace, and find packet 33823 documented by the network consultant:

Packet 33823 – hq-app1 issues a SQL command to run a Stored Procedure on the SQL Server

Exec CORP..spREVREC_EXTENDER 'CORP\username', '2013-09-24 03:35:30:35', 'EXT_REVREC'

Aha, now we are getting to the root of the problem. There is a custom stored procedure called "spREVREC_EXTENDER" that is being called--this procedure is what is locking the tables under process 178. We deduce that this custom stored procedure was developed by the revenue recognition software vendor to extract data from Dynamics GP for analysis.

When then jump back to SQL Server Management Studio and pull up that custom stored procedure so that we can view its contents. Except...it is encrypted. D'oh!

Never fear, as I have a tool that can decrypt stored procedures with a single click. After some file transfer acrobatics, we finally get a copy of the customer database onto my development machine so that I can use the decryption tool. And voilà, we can now see the contents of the stored procedure. And it is a doozie. It is 160 lines with 2 derived tables and 12 joins. (Attendees of our SQL Skills for Consultants presentation at the Tech Airlift would know that a good substitute for a derived table is a CTE, and that CTEs can be helpful in improving query performance!)

So now it is crystal clear what is happening. The stored procedure, with its 2 derived tables and 12 joins, is taking forever to run. It was developed against a brand new Dynamics GP company database that only had a few records--of course it ran fine back then. Now that the customer has 100,000 records in the SOP tables and each of the Extender tables, it takes anywhere from 5 to 20 minutes to run. It's a classic example of how it is often impossible to predict future performance of a complex query, and why SQL query optimization is important.

But that's not all. It isn't just that it has 12 joins--it's the particular table that it is joining and the fields it is using in the join. This is just a small snippet of the query--to anyone familiar with Extender tables, it should be fairly obvious that this isn't going to end well.

from EXT00100 e

INNER JOIN EXT00102 d1 ON d1.PT_Window_ID = e.PT_Window_ID and d1.PT_UD_Key = e.PT_UD_Key and d1.PT_UD_Number = 1

INNER JOIN EXT00102 d2 ON d2.PT_Window_ID = e.PT_Window_ID and d2.PT_UD_Key = e.PT_UD_Key and d2.PT_UD_Number = 2

INNER JOIN EXT00103 t1 ON t1.PT_Window_ID = e.PT_Window_ID and t1.PT_UD_Key = e.PT_UD_Key and t1.PT_UD_Number = 3

INNER JOIN EXT00101 s1 ON s1.PT_Window_ID = e.PT_Window_ID and s1.PT_UD_Key = e.PT_UD_Key and s1.PT_UD_Number = 6

INNER JOIN EXT00101 s2 ON s2.PT_Window_ID = e.PT_Window_ID and s2.PT_UD_Key = e.PT_UD_Key and s2.PT_UD_Number = 7

INNER JOIN EXT00103 t2 ON t2.PT_Window_ID = e.PT_Window_ID and t2.PT_UD_Key = e.PT_UD_Key and t2.PT_UD_Number = 4

INNER JOIN EXT40102 m

ON m.PT_Window_ID = t2.PT_Window_ID

and m.Field_Number = t2.PT_UD_Number

and m.LNITMSEQ = t2.TOTAL

INNER JOIN EXT00103 t3 ON t1.PT_Window_ID = e.PT_Window_ID and t3.PT_UD_Key = e.PT_UD_Key and t3.PT_UD_Number = 5

INNER JOIN SOP30200 h

ON h.SOPNUMBE = rtrim(e.Key_Strings_2)

-- and h.SOPTYPE = convert(INT,e.Key_Strings_3)-1

INNER JOIN REV_REC_MASTER a

ON a.SOPNUMBE = h.SOPNUMBE

AND a.ORIG_SOPTYPE = h.SOPTYPE

AND a.LNITMSEQ = convert(INT,e.Key_Strings_1)

AND a.VOID_FL = 0

INNER JOIN REV_REC_RULES b

ON b.SOPNUMBE = h.SOPNUMBE

AND b.ORIG_SOPTYPE = h.SOPTYPE

and b.LNITMSEQ = convert(INT,e.Key_Strings_1)

and b.DELETE_FL = 0

and b.VOID_FL = 0

LEFT OUTER JOIN REV_REC_TRX_RULES r

ON r.SOPNUMBE = a.SOPNUMBE and r.LNITMSEQ = a.LNITMSEQ

I haven't yet dug into the query to determine the specific causes of the performance issues, but one leading candidate is the join with "rtrim(e.Key_Strings_2)". That is a join using a function on a non-indexed field. I sense trouble ahead.

At this point, we have identified the cause of the GP lockups and know that we need to have the rev rec vendor modify the custom stored procedure.

I'm guessing that a quick fix would be to simply add the "WITH (NOLOCK)" table hint to the query. In theory, this should eliminate the shared lock and allow GP to update the EXT tables without issue. But that would just be covering up the underlying problem--the stored procedure would still take 5 to 20 minutes to run. And using NOLOCK on a query that runs that long is probably a bad idea.

So we will likely need to work with the rev rec vendor to completely restructure the query so that it runs much faster. Preferably in just a few seconds.

Despite the work ahead, we felt pretty good by 5pm today, as we were confident that we had found the cause of the client's performance issue, and knew that it could be fixed.

It was a good day.

Postscript: Some of you may know that with GP 2013, eOne completely redesigned the table structures for Extender. The tables have different names, and work differently than the prior Extender tables. So, when this client upgrades to GP 2013, the revenue recognition stored procedure will have to be redesigned yet again to query data from the new 2013 Extender tables.

For more information on different types of SQL locks, here is a good TechNet article on SQL locking:

http://technet.microsoft.com/en-us/library/aa213039(v=sql.80).aspx

Steve Endow is a Dynamics GP Certified Trainer and Dynamics

GP Certified IT Professional in Los Angeles. He is also the owner of

Precipio Services, which provides Dynamics GP integrations, customizations, and

automation solutions.

No comments:

Post a Comment